How to succeed with online binary options trading 2023

Binaryoptions.com offers the best trading education, backed by more than 10 years of experience in online trading. We will help you with:

- Avoiding fraud involving trading signals and robots

- Strategies

- Beginner guides

- Knowledge sharing from experts

- The best brokers for trading

- Trading platform reviews and tips

What are binary options? - Definition

A binary option is a financial product, also known as an "all-or-nothing" option, whose outcome has only two possibilities: you either earn a high return or lose the amount invested. It is a simple "yes or no" proposition, which is why it is called "binary".

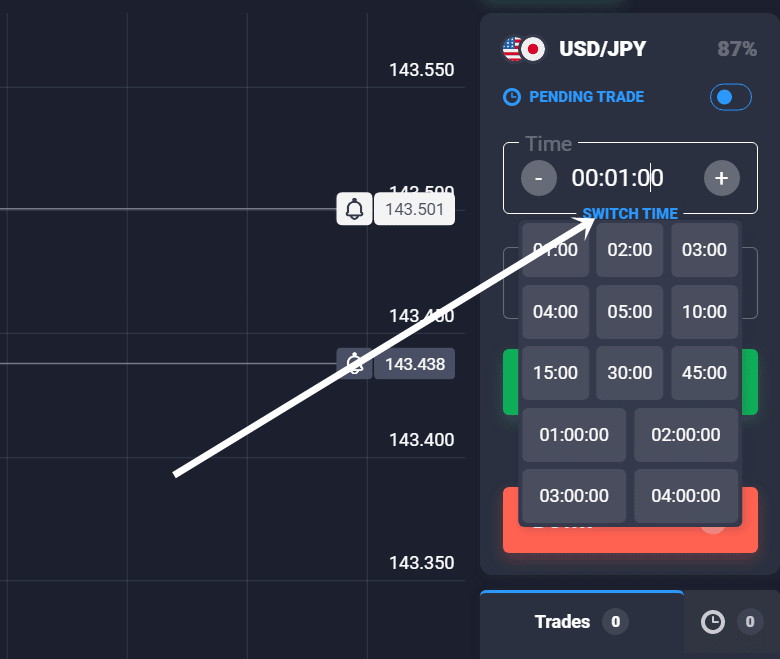

You predict whether the current market price will rise or fall within an expiry time. If you are right when that period ends, you earn a monetary amount that is fixed by the broker. The trader chooses the time frame (expiry time) on the broker's platform. Options can run from 30 seconds up to 2 months or even longer. All that matters is whether the price is above or below the strike price when the option expires.

Key facts:

- A binary option has only two outcomes: win or lose.

- Traders can earn a high return, depending on the broker's offer.

- A binary option ends at a fixed expiry time and shows the result immediately afterward.

- Risk is limited to the full amount invested.

- Binary options are regulated in the US but are mainly traded offshore in other countries.

Binary options are offered by OTC (over-the-counter) brokers that match orders between different traders.

The investment amount can be as low as $1 or as high as $1,000, depending on the platform you trade on.

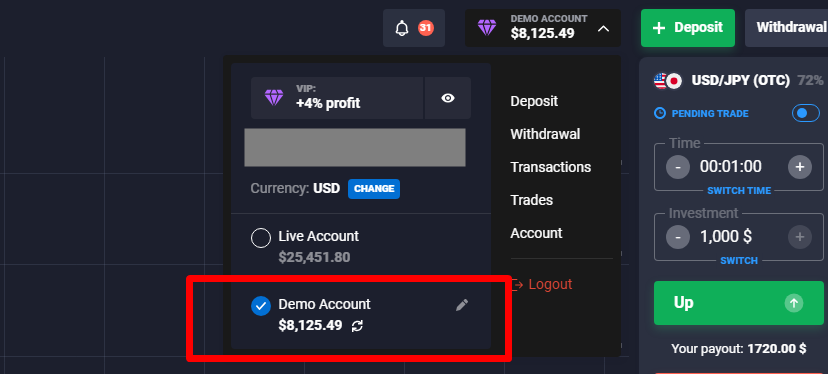

If you are new to binary trading, it is recommended to start with a free demo account. You trade with virtual money and do not risk real money on the markets.

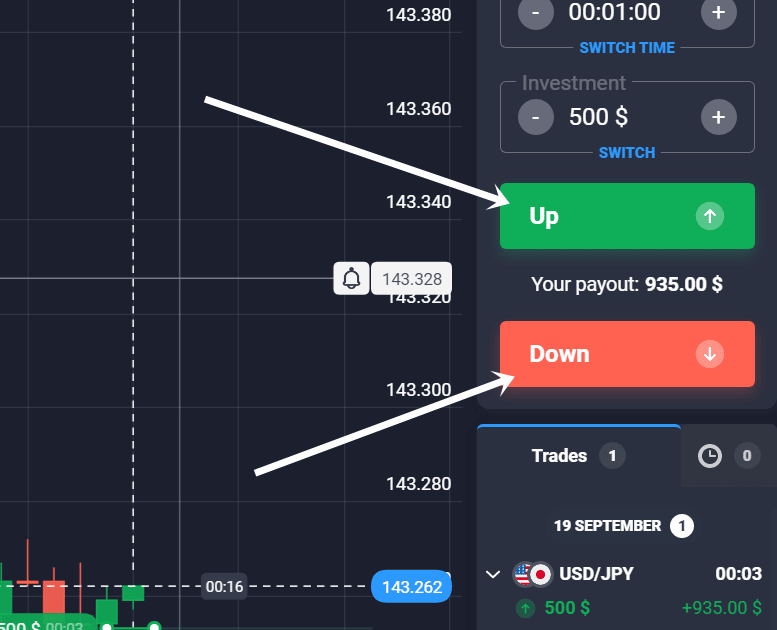

Trading example:

To start trading, follow these steps:

- Choose the asset you want to trade

- Forecast the future price movement (up or down)

- Choose the option's expiry time

- Choose the investment amount for the trade (starting from $1)

- Open the trade and enter the market at a strike price

- Wait until the expiry time runs out and the binary option expires

- The price must be above or below the strike price (depending on the direction of the trade)

- Earn a profit of up to 100% or lose the amount invested

For more, read our complete guide on how to trade!

About us – Binaryoptions.com

Binary options are a high-risk investment, a fact well known to beginners and professional traders alike. They are a form of betting on the markets that ends in either a profit or a loss.

We have traded this financial instrument for more than 10 years and love it because it is a very good way to make money in a short time frame. The way the product is built lets us use special strategies, which we show you on our website.

We are experienced traders, analysts, and content writers who want to help the public understand how to trade more successfully. There is so much false and misleading information about it on the internet. With our site, we want to say "NO" to losses, scams, and false information in binary options trading.

Learn from our past experiences and mistakes. We will show you transparently how to be more successful in binary trading.

Why you can trust us

How do we make sure our readers get accurate information and the best knowledge? Our editorial team works to strict editorial guidelines. All our published articles are double-checked and verified by real trading experts. To review and compare brokers, we follow our review methodology. Platforms are checked against the same criteria, which matter for a safe trading experience for our readers.

We work only with trusted brands that have been in the industry for many years. Brokers are tested with our own money under real market conditions. We cannot prevent scams 100%, but our trust score can certainly help you. We examine every broker for trustworthiness and security. In addition, we check their regulators and official company details to make sure we recommend the best offers to our readers.

Top brokers – start trading here:

The binary options broker is the intermediary between the trader and the financial markets. These platforms let you go short or long on different markets. Some of them offer 100 assets to trade; others offer more than 500.

There is a wide variety of brokers and platforms on the internet; on binaryoptions.com, we have compared the best of them with each other. They let you start trading with a low minimum deposit of $10 and a minimum trade amount of $1. Maximum profits are not capped.

100+ markets

- Accepts international clients

- High payouts of 95%+

- Professional platform

- Fast deposits

- Social trading

- Free bonuses

from $50

(Risk warning: trading is risky)

100+ markets

- Min. deposit $10

- $10,000 demo account

- Professional platform

- High profit of up to 95%

- Fast withdrawals

- Signals

from $10

(Risk warning: trading is risky)

300+ markets

- $10 minimum deposit

- Free demo account

- High return of up to 100% (if the prediction is correct)

- The platform is easy to use

- 24/7 support

from $10

(Risk warning: your capital could be at risk)

Before you start, try the demo account

A demo account is a special trading account funded with virtual money, so the balance does not contain real money. You can lose all the virtual funds without any risk to your own money. This account is the best way to start trading binaries. We recommend starting with a demo account rather than risking your own money first. Binary trading sometimes looks very easy, but the truth is that most traders lose money.

To gain experience, develop a trading strategy, learn about different markets, and get to know the trading platforms, use the demo account first for your own safety. Once you feel comfortable and have made some profit with virtual money, you can start trading with a real account.

(Risk warning: your capital may be at risk)

The most important terms:

As a beginner, it is not easy to understand binary options in the first 5 minutes. Take enough time to read these important terms and understand them; this is the key to your success. If you do not understand the financial product and its features, you could lose all your money. In the following section, we list and explain the most important terms.

- Underlying asset – The market on which you trade binaries.

- Expiry time – You receive the final result when the trade expires.

- Strike price – The price at which you opened the buy or sell trade. At expiry, the price must be above it (for a buy) or below it (for a sell) for you to make a profit.

- Fixed profit amount – The possible return you can earn from the trade.

- Call option – You invest in rising prices.

- Put option – You invest in falling prices.

Activul de bază al unei opțiuni binare

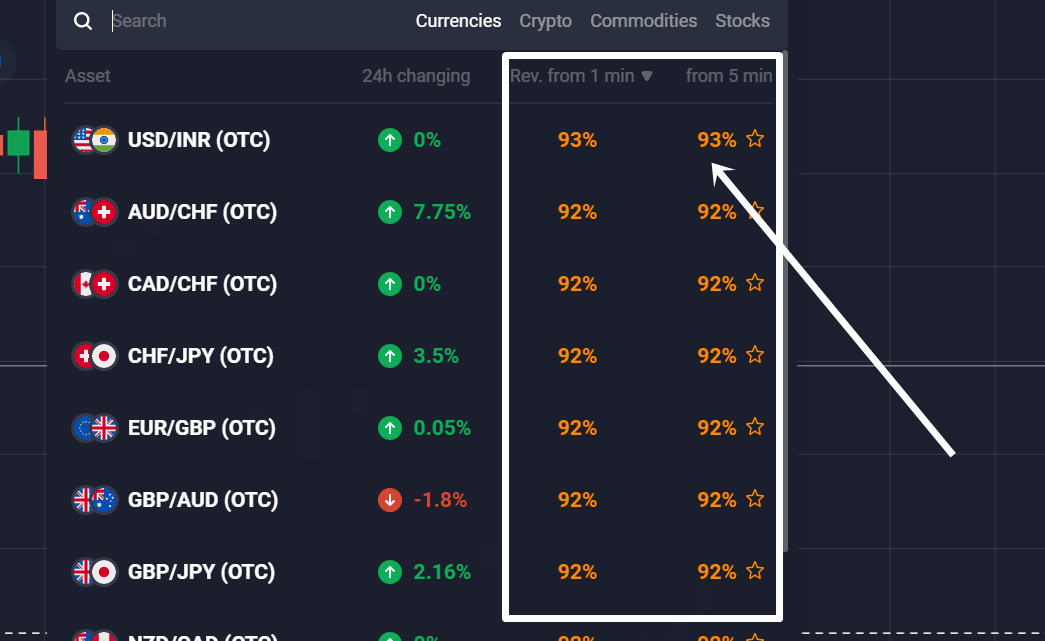

Piața de bază poate fi acțiuni, mărfuri, criptomonede, forex sau ETF-uri. În funcție de broker, activele sunt oferite. Tranzacția doar cumpără sau vinde un contract de opțiuni asupra acestor active suport. Nu este o investiție reală în activ, cum ar fi cumpărarea de aur de la un comerciant cu amănuntul. Doar tranzacționați contractele de opțiuni.

The expiry time of an option

A binary option always closes at a fixed expiry time. For example, you can trade 30 seconds, 60 seconds, or even 1 month. This depends on the broker you choose and the expiry dates available. When the expiry date is reached, the price of the underlying asset must be above or below the target price.

Target price/strike price

The target price is the basic entry point, or strike price. When you buy or sell a binary option, the strike price is the current market price. That is why good timing is very important. Even if you miss the target price by 0.1 points, you can lose your entire investment. On the other hand, you can earn a large profit if you are right. You may wonder: can I have two target prices? The answer is simple: this is not possible.

Fixed profit amount

A binary option has a fixed profit amount, which is set by the broker. The fixed payout can be 60%, 70%, or even 90% of your investment amount. Keep in mind that you can lose your entire investment if you make the wrong trading decisions. Normally there are only two outcomes: you lose or you win. The fixed payout also depends on the underlying market you trade and on the expiry time. Sometimes there is a third outcome: you get your money back when the market lands exactly on the strike price.

Call option and put option

A binary option is a simple trading product with limited risk. There are only two ways to trade: call options and put options. A call option means you predict that a market will rise above a certain price within a limited expiry time. A put option means you predict that a market will fall below a certain price within a limited expiry time.
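The settlement rule described in the sections above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `Direction` and `settle` and the `refund_on_tie` flag are hypothetical, and real brokers may settle ties or round prices differently.

```python
from enum import Enum


class Direction(Enum):
    CALL = "call"  # prediction: price finishes above the strike price
    PUT = "put"    # prediction: price finishes below the strike price


def settle(direction: Direction, strike: float, expiry_price: float,
           stake: float, payout_rate: float, refund_on_tie: bool = True) -> float:
    """Return the net profit (positive) or loss (negative) of one trade.

    payout_rate is the broker's fixed return, e.g. 0.8 for 80%.
    refund_on_tie is an assumption: some brokers return the stake when
    the price lands exactly on the strike, others treat it as a loss.
    """
    if expiry_price == strike:
        return 0.0 if refund_on_tie else -stake
    price_rose = expiry_price > strike
    won = price_rose if direction is Direction.CALL else not price_rose
    # A win pays the fixed percentage of the stake; a loss costs the whole stake.
    return stake * payout_rate if won else -stake
```

For example, a $10 call with an 80% payout earns about $8 if the price finishes even 0.1 points above the strike, and loses the full $10 if it finishes 0.1 points below it.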

To calculate your profit or loss in binary options trading, you can use our in-house tool, the profit calculator.

Enter the investment amounts, the broker's return, and the number of losing and winning trades, then see the overall result of your trading. For more information, visit our profit calculator page.
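The arithmetic behind such a calculator is simple enough to sketch by hand. The function below is not the site's tool; it is a minimal Python illustration that assumes every trade uses the same stake and the same payout rate, and the name `session_result` is made up for this example.

```python
def session_result(stake: float, payout_rate: float, wins: int, losses: int) -> float:
    """Net profit or loss of a series of equal-stake binary option trades.

    Each winning trade earns stake * payout_rate; each losing trade
    costs the full stake.
    """
    if wins < 0 or losses < 0:
        raise ValueError("wins and losses must be non-negative")
    return wins * stake * payout_rate - losses * stake


if __name__ == "__main__":
    # 6 winners and 4 losers at $10 per trade with an 80% payout
    print(session_result(stake=10, payout_rate=0.8, wins=6, losses=4))
```

With 6 winners and 4 losers at $10 per trade and an 80% payout, the winners bring in $48 and the losers cost $40, so the session ends about $8 ahead.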

(Risk warning: your capital may be at risk)

Advantages and disadvantages of binary options:

Advantages:

- Limited risk

- High profit available

- Short-term and long-term trading

- Easy to understand

- Professional platforms available

- Can be used for hedging

- Can be used on any financial market

Disadvantages:

- Can become addictive

- There are some bad brokers out there

- Not available in every country

(Risk warning: your capital may be at risk)

Are binary options legal or not?

Many traders ask whether binary options are legal. The question matters when we talk about regulated and safe online trading. In the past, there were a lot of scammers in the industry. Many regulators have warned about these problems and are regulating the financial product more and more tightly. Today it is important to use a trading platform that is supervised by a regulatory authority.

Binary options are fully legal to trade in 99% of countries. There are a few exceptions for retail investors:

- European Union: may not be sold to retail traders

- Canada: completely banned

- Israel: completely banned

- Australia: not permitted for retail traders

Binary options are legal to trade:

The financial product is legal to trade for investors and retail traders. Professional traders can trade binary options too. A trader can sign up with a suitable broker and start binary trading. Some brokers are not regulated, so you should be careful and check with the regulator whether you may trade there. Most of the time it is legal to open a trading account.

Is it banned in Europe?

In the European Union, binary options services may be sold only to professional traders. This means that brokers in Europe can accept only professional traders. To qualify as a professional trader, you need more than EUR 500,000, a high trading volume, or a financial education. If you meet 2 of these criteria, you can trade as a professional trader in Europe. You can also trade with a broker outside Europe, but this is not regulated. Most platforms were linked to the Cypriot regulator CySEC between 2010 and 2018.

Is it legal in the USA?

Binary options are an official financial product in the United States of America. US citizens are allowed to trade, but only with a regulated broker that is overseen by a US regulatory body such as the CFTC (Commodity Futures Trading Commission).

But watch out for unregulated brokers. FINRA (the Financial Industry Regulatory Authority) has already warned about unregulated entities offering services to US traders. If you do not know your broker's status, you can simply use FINRA's BrokerCheck: https://brokercheck.finra.org/

The most important regulators in the USA:

- CFTC – Commodity Futures Trading Commission

- FINRA – Financial Industry Regulatory Authority

- SEC – Securities and Exchange Commission

- NFA – National Futures Association

Binary options trading is available in the USA through the North American Derivatives Exchange (NADEX). It is one of the regulated trading platforms. You can buy or sell there with a few clicks.

Binary options regulations

Today there are only a few regulated binary options brokers; most are unregulated. Regulations differ from country to country. Before you sign up with a broker, check the regulatory status in your country. In our experience, most brokers accept clients from 90% of countries. You can also check on the broker's website whether it operates in your country. Many brokers block clients from countries where trading is not allowed.

Binary options trading platforms and brokers

When you start trading binary options, you will find a lot of trading platforms on the internet. But which one should you choose for your investments? A broker lets you trade financial instruments based on underlying assets. The broker is the intermediary between the financial markets and the trader. Retail traders are offered trading apps, trading platforms, software, and live charts.

The following key points will help you choose the broker that suits you best. Ask these questions before you choose a binary options trading company:

- Is the trading platform regulated?

- Is the trading platform legal in my country?

- Does the broker offer its services in my country?

- Can I use a virtual binary account to test the trading app?

- How high is the minimum deposit?

- Are there hidden fees?

- How high are the fees for deposits and withdrawals?

- Is the trading software suitable for my analysis?

- How many assets are available to trade?

- How high is the return on investment during the main trading hours?

- Does the broker offer real-time support?

As you can see, there are many questions to ask before choosing a broker. In our binary options broker comparison, we show you our recommendations.

What methods do fraudulent binary brokers use?

A fraudulent binary options trading firm does not meet any regulatory requirements. That does not mean every unregulated trading platform is bad, but most of the time it is very risky to start trading with one. If you start investing and are scammed by a company, the following methods are common:

- Rejected requests: The broker rejects your request to open or close a trade. This can be very painful in volatile binary options markets.

- No return of funds: The scam binary broker does not return funds to your bank account or payment method.

- Manipulated real-time charts: The fraudulent broker tells you it has the best charts and trade execution, but they are manipulated.

- Fake account managers: Brokers will tell you they have a team of experts who can help you make money. These people only want your money and have no good advice for your investment.

- Hidden fees: The broker charges you huge fees, for example when you try to withdraw money.

- Manipulated trades: The binary broker manipulates your winning trades and turns them into losing trades.

Create a repeatable strategy for your success

If you ask a professional trader how they trade the market, they will tell you that they follow rules and a strategy for entering and exiting the market. A binary options strategy is necessary to make money in the long run. Yes, you can have profitable trades without a plan if you get lucky, but in the long run you will need a proper strategy that works!

Many trading strategies work in the market; the most important point is to execute the rule set like a machine. Traders often do not trade by their own rules, which leads to losses. In our example, we analyzed the market using price action and the candlestick chart. We can clearly see that the market is pushing prices higher. The market is not making lower lows, so we only want to trade with the trend and open a few long positions here.

A strategy should address the following points:

- Where is the market right now?

- What are the market's latest moves?

- Can I see a trend or a range?

- Where are the highs and lows?

- Do I see a confirmation to enter a trade?

For more detailed knowledge and learning, visit our strategy page.

What are the biggest risks for investors?

As mentioned earlier, binary options trading is very risky. Beginners are often impressed by YouTube videos in which traders make thousands of dollars in a few seconds. What they do not see is that the traders who appear on YouTube or other platforms have experience and know what they are doing. You can copy their trading strategies, but you will not end up making money because you lack market experience.

You can lose your entire investment amount when trading binary options. This is the most underestimated risk we see when beginners start trading. It sounds good that you can earn a profit of 90%+ on the broker's website. But if you are wrong, you lose 100%. The risk-reward ratio of binary options always works against the investor. That is why you need more than 55% – 60%, or even 70%, winning trades to make money consistently.

See the table below:

| Average return of a binary option | Minimum winning trades needed to make money (just above break-even) | Win rate |
|---|---|---|
| 90% | at least 53 out of 100 | 53%+ |
| 80% | at least 56 out of 100 | 56%+ |
| 70% | at least 59 out of 100 | 59%+ |
| 60% | at least 63 out of 100 | 63%+ |

As the calculation shows, you need a win rate of at least 53% – 56% to break even (measured with an average return of 80% – 90%). To earn more money, you need a win rate of at least 60% – 70%. Several factors influence your return:

- The payout rate on the underlying asset

- The win rate of your trades

- Whether or not you use a martingale strategy for binary options
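The win rates in the table follow from a one-line calculation. With a payout rate r and equal stakes, a win rate w breaks even when w · r = 1 − w, which gives w = 1 / (1 + r). The Python sketch below illustrates that arithmetic; the function names are made up for this example, and it assumes every trade has the same stake and payout.

```python
import math


def break_even_win_rate(payout_rate: float) -> float:
    """Win rate at which equal-stake trading nets exactly zero.

    Derived from w * payout_rate - (1 - w) = 0.
    """
    return 1.0 / (1.0 + payout_rate)


def min_winners(payout_rate: float, trades: int = 100) -> int:
    """Smallest number of winning trades out of `trades` that yields a profit."""
    return math.floor(trades * break_even_win_rate(payout_rate)) + 1


if __name__ == "__main__":
    for rate in (0.9, 0.8, 0.7, 0.6):
        print(f"{rate:.0%} payout: {min_winners(rate)} winners out of 100")
```

For payouts of 90%, 80%, 70% and 60% this gives 53, 56, 59 and 63 winners out of 100, matching the table.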

High risk with martingale systems or binary doubling strategies

Many beginners use a martingale system or doubling strategy to recover losses. The idea is simple and has its roots in gambling: if you lose a bet, you just double the stake. In trading, the payout is below 100%, so you have to invest more than double to recover all your losses. The calculations below show examples:

Doubling strategy with an account size of $10,000 and 1% risk management. After 6 losing trades, the remaining $3,700 cannot cover the next stake of $6,400, so you cannot continue this strategy:

| Account size (after the loss) | Losing trade | Investment (2x) | Profit (80% average) | Net profit (if you win) |
|---|---|---|---|---|
| $9,900 | 1. | $100 | $80 | $80 |
| $9,700 | 2. | $200 | $160 | $60 |
| $9,300 | 3. | $400 | $320 | $20 |
| $8,500 | 4. | $800 | $640 | – $60 |
| $6,900 | 5. | $1,600 | $1,280 | – $220 |
| $3,700 | 6. | $3,200 | $2,560 | – $540 |

Martingale strategy with an account size of $10,000 and 1% risk management. After 5 losing trades, your account can no longer cover the sixth stake and you cannot continue this strategy.

| Account size (after the loss) | Losing trade | Investment (2.3x) | Profit (80% average) | Net profit (if you win) |
|---|---|---|---|---|
| $9,900 | 1. | $100 | $80 | $80 |
| $9,670 | 2. | $230 | $184 | $84 |
| $9,141 | 3. | $529 | $423 | $93 |
| $7,925 | 4. | $1,216 | $972 | $113 |
| $5,129 | 5. | $2,796 | $2,236 | $161 |
| – $1,301 | 6. | $6,430 | $5,144 | $273 |

We do not recommend using these strategies, because you can quickly wipe out your trading account! As shown above, 5 or 6 losing trades in a row are enough to end the strategy, even if you start with only 1% of the account balance. Learn good risk management and invest a fixed amount per trade, as professional traders do.
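The two tables can be reproduced with a short simulation. The Python sketch below is an illustration only: `martingale_ladder` is a hypothetical helper, it assumes a fixed payout rate and an unbroken losing streak, and it works with unrounded stakes, so the 2.3x figures differ by a few dollars from the rounded values in the table.

```python
def martingale_ladder(balance: float, first_stake: float, multiplier: float,
                      payout_rate: float, max_steps: int = 10):
    """List one row per consecutive losing trade.

    Each row is (step, stake, balance_after_loss, net_profit_if_won).
    The ladder stops as soon as the next stake exceeds the balance.
    """
    rows = []
    stake = first_stake
    lost_so_far = 0.0
    for step in range(1, max_steps + 1):
        if stake > balance:
            break  # the account cannot fund the next trade
        # A win at this step pays the fixed return minus everything lost before.
        net_if_won = stake * payout_rate - lost_so_far
        balance -= stake
        lost_so_far += stake
        rows.append((step, stake, balance, net_if_won))
        stake *= multiplier
    return rows


if __name__ == "__main__":
    for multiplier in (2.0, 2.3):
        print(f"multiplier {multiplier}")
        for step, stake, left, net in martingale_ladder(10_000, 100, multiplier, 0.8):
            print(f"  loss {step}: stake {stake:,.0f}, balance {left:,.0f}, net if won {net:,.0f}")
```

With a $10,000 account and a $100 first stake, the 2x ladder survives six losses and the 2.3x ladder only five before the next stake is unaffordable. The 2x ladder also shows why plain doubling is not enough at an 80% payout: from the fourth trade on, even a win leaves a net loss.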

Emotions and gambling

Another high risk in binary trading is emotions and psychology. For a beginner, online trading is sometimes like going to a casino: you can win or lose! When they lose too much money or too many trades in a row, traders tend to make irrational trading decisions because they want to win back all their losses. In our experience, a beginner may start to destroy their account because they cannot believe they lost money so quickly. Often this leads to a large number of trades at high volume. Learn to accept losses and keep following a trading plan!

The solution to the risk of losing money: the trading plan

Create a strict trading plan that governs how you manage your trades. A trading plan should cover the following points:

- Money management

- Trade entry

- Trade exit

- Expiry time of the trade

- The right investment amount

- Pay attention to market news

- Stick to your binary options trading strategy

The best way to reduce emotions is to have a trading plan with a set of rules. This includes a proper strategy. For example, you decide that when the 50-period SMA and the 20-period EMA cross and the RSI indicator is oversold/overbought, you open a trade and invest money. If you do not see this setup on the chart, you do not enter a trade. This is just a simple example; you can add more and more rules to it.
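A rule like this can be written down so that it is checked mechanically rather than by feel. The Python sketch below is one possible reading, not a recommended strategy. Several details are assumptions that the text does not specify: the 14-period RSI, the 30/70 thresholds, the simple-average RSI variant (not Wilder's smoothing), and the pairing of an upward cross with an oversold RSI for a call and a downward cross with an overbought RSI for a put.

```python
def sma(prices, period):
    """Simple moving average of the last `period` prices."""
    return sum(prices[-period:]) / period


def ema(prices, period):
    """Exponential moving average, seeded with the SMA of the first `period` prices."""
    k = 2 / (period + 1)
    value = sum(prices[:period]) / period
    for price in prices[period:]:
        value = price * k + value * (1 - k)
    return value


def rsi(prices, period=14):
    """RSI from plain sums of gains and losses over the last `period` changes."""
    changes = [b - a for a, b in zip(prices[-period - 1:-1], prices[-period:])]
    gains = sum(c for c in changes if c > 0)
    losses = sum(-c for c in changes if c < 0)
    if gains == 0 and losses == 0:
        return 50.0  # flat market: treat as neutral
    if losses == 0:
        return 100.0
    return 100 - 100 / (1 + gains / losses)


def signal(prices, fast=20, slow=50, rsi_period=14, oversold=30, overbought=70):
    """Return "call", "put", or None for the latest price bar."""
    if len(prices) < max(fast, slow, rsi_period) + 1:
        return None  # not enough history to evaluate the rule
    previous = prices[:-1]
    fast_prev, fast_now = ema(previous, fast), ema(prices, fast)
    slow_prev, slow_now = sma(previous, slow), sma(prices, slow)
    crossed_up = fast_prev <= slow_prev and fast_now > slow_now
    crossed_down = fast_prev >= slow_prev and fast_now < slow_now
    strength = rsi(prices, rsi_period)
    if crossed_up and strength <= oversold:
        return "call"
    if crossed_down and strength >= overbought:
        return "put"
    return None  # setup not present: stay out of the market
```

Returning `None` whenever the setup is missing mirrors the rule in the text: no setup on the chart, no trade. Further rules can be added as extra conditions before a signal is returned.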

Frequently asked questions:

When can you use a binary option?

Binary options can be used in markets with high or even low volatility. In most cases, binary options are used to make money or to hedge an existing portfolio. Retail investors often use this financial product to earn short-term profits. Professional traders use it to hedge an investor's account.

Are binary options a scam?

Binary options are not a scam. They are an officially regulated financial instrument. Unfortunately, they are unavailable in many countries for regulatory reasons. By choosing an experienced and well-known broker, you are on the right side when investing in binary options.

Can you get rich trading binary options?

It depends on your definition of "rich". You can make a lot of money trading binary options, but it is also a very risky way to trade the market. Most traders underestimate the risks when they enter a trade. You will need a proper trading strategy and experience to make money.

Why are there so many warnings about binary options?

In the past, there were a lot of scammers in this market who used fake websites or fake price charts to steal beginners' money. Regulators began to warn about this and even to ban trading in the instrument. Trading binary options is a very risky investment opportunity. Keep in mind that you can lose all the money you invest!

(Risk warning: your capital may be at risk)

Reviews from our trading community

Jordan Peters

Binary options trader

Excellent comparison site. Binaryoptions.com showed me the best binary broker for my investments. I now trade with 20% higher returns than with my old broker.

Andrea Walbet

Binary options investor

Thank you for the great information on this site! I have improved my trading strategies thanks to you.

John Mueller

Binary options trader